TL;DR: A “Skill” in production AI is not a saved prompt — it’s a capability abstraction layer with a defined input schema, tool bindings, validation, and retry logic. This post explains why that distinction matters and how Skills fit between raw model calls and higher-level agent orchestration.

This post is part of a series on building real AI systems. If you haven’t read the previous piece on moving beyond prompts, that’s a good place to start.

Introduction

Most people treat AI skills as glorified prompt templates.

Honestly, that’s fair. A lot of products market “skills” as saved instructions with a nicer UI. You click a button, it loads some text into the system prompt, done.

But in a real production system, a Skill is something different.

It is a capability abstraction layer—sitting between raw model calls and the higher-level orchestration that actually does something useful. If prompts are like making direct API calls, Skills are the first layer of actual software architecture you build on top of them.

That distinction sounds abstract right now. It starts to matter a lot once your system gets complicated.

Prompt Templates Fall Apart Eventually

Prompt templates are fine to start with. Quick to write, easy to understand, and they work.

Until they don’t.

Say you’re building a basic content pipeline:

Research topic → Draft outline → Write article → Review tone → Check SEO → Generate metadata

Six steps. You write six prompts. Seems manageable.

A few months later, the pain starts showing up in small ways:

- You copy-paste prompt logic into a new workflow and tweak it slightly. Then again. Now you have three versions that are almost identical but not quite.

- Someone changes the output format for one prompt. Two other steps break silently.

- You add a tool integration to one flow. You realize you need the same thing in three other flows and now you’re doing it manually each time.

- Retry logic gets bolted onto individual prompts in different ways depending on who wrote them.

None of these problems are catastrophic on their own. Together, they quietly accumulate until you realize you’re maintaining a pile of interconnected prompt strings that nobody fully understands anymore.

That’s the moment when prompts stop being instructions and start being infrastructure—but without any of the structure that makes infrastructure manageable.

This is where Skills become necessary.

A Skill Is a Capability Contract

Here’s a useful way to think about it:

A Skill is a standardized interface around a model capability.

Not around a prompt. Around a capability.

In practice, a properly designed Skill specifies a lot more than the prompt text itself:

    name: blog_writer
    inputs:
      - topic
      - audience
      - tone
    tools:
      - web_search
      - knowledge_base
    constraints:
      - markdown_output
      - seo_friendly
      - no_ai_tone
    validation:
      - min_word_count
      - heading_structure_check
    retry_policy:
      max_attempts: 2

Once you’re writing something like this, you’re not really doing prompt engineering anymore. You’re doing interface design.
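
To make the "interface design" point concrete, here is a minimal sketch of the same spec as a typed Python object. The class name `SkillSpec` and the `check_inputs` method are assumptions made for illustration, not part of any particular framework; the field values simply mirror the spec listed above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SkillSpec:
    """Declarative description of a skill (hypothetical shape, for illustration)."""

    name: str
    inputs: tuple[str, ...]
    tools: tuple[str, ...] = ()
    constraints: tuple[str, ...] = ()
    validation: tuple[str, ...] = ()
    max_attempts: int = 1

    def check_inputs(self, payload: dict) -> None:
        """Reject calls that do not match the declared input schema."""
        missing = [key for key in self.inputs if key not in payload]
        unexpected = [key for key in payload if key not in self.inputs]
        if missing or unexpected:
            raise ValueError(
                f"{self.name}: missing={missing}, unexpected={unexpected}"
            )


# The same fields as the blog_writer spec, now checkable in code.
blog_writer = SkillSpec(
    name="blog_writer",
    inputs=("topic", "audience", "tone"),
    tools=("web_search", "knowledge_base"),
    constraints=("markdown_output", "seo_friendly", "no_ai_tone"),
    validation=("min_word_count", "heading_structure_check"),
    max_attempts=2,
)
```

Calling `blog_writer.check_inputs({"topic": "skills"})` raises a `ValueError` that names the missing `audience` and `tone` fields, so a caller that drifts from the contract fails loudly instead of silently.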

The prompt probably still exists somewhere inside the implementation. But from the outside, what you’ve defined is:

Input schema → Capability execution → Validated output

That’s much closer to an API or a microservice than a prompt. And it behaves like one: you can test it independently, version it, and swap the implementation without changing the callers.
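
The input → execution → validated output flow can be sketched in a few lines. This is one possible shape under stated assumptions, not a reference implementation: `run_skill`, `SkillValidationError`, and `fake_writer` are hypothetical names, the two validators are simplified stand-ins for the checks named in the spec, and the "model" is a canned iterator so the example runs offline.

```python
from typing import Callable

Validator = Callable[[str], bool]


class SkillValidationError(Exception):
    """Raised when no attempt produced output that passed validation."""


def run_skill(
    execute: Callable[[dict], str],
    validators: list[Validator],
    inputs: dict,
    max_attempts: int = 2,
) -> str:
    """Run the capability, validate its output, and retry on failure."""
    for _ in range(max_attempts):
        output = execute(inputs)
        if all(check(output) for check in validators):
            return output
    raise SkillValidationError(f"validation failed after {max_attempts} attempts")


# Simplified, hypothetical versions of the validators named in the spec.
def min_word_count(text: str, minimum: int = 5) -> bool:
    return len(text.split()) >= minimum


def heading_structure_check(text: str) -> bool:
    return text.lstrip().startswith("# ")


# Stand-in for a model call: the first draft is too short, the second passes.
_drafts = iter(["# Hi", "# Skills\n\nA skill wraps a capability behind a contract."])


def fake_writer(inputs: dict) -> str:
    return next(_drafts)


article = run_skill(
    fake_writer,
    [min_word_count, heading_structure_check],
    {"topic": "skills", "audience": "engineers", "tone": "plain"},
)
```

Because retries and validation live in `run_skill` rather than inside any one prompt, every skill gets the same behavior, and the caller only ever sees output that passed the checks or an explicit error.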

Why This Actually Matters for Workflows

The main reason Skills are useful is that workflows need stable interfaces to call into.

Without that abstraction, every workflow ends up owning its own prompting logic:

Workflow step 1: raw prompt A

Workflow step 2: raw prompt B

Workflow step 3: raw prompt C

Which means your orchestration logic and your model interaction logic are completely tangled together. Changing one touches the other. Reusing anything requires copy-pasting.

With properly defined Skills, a workflow looks more like this:

research_skill()

summarize_skill()

outline_skill()

writer_skill()

Now the workflow doesn’t care how writer_skill works internally. It just knows what it takes and what it produces. You can improve the skill, swap the model, change the prompt—the workflow doesn’t need to know.
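
Here is a small sketch of that separation. The skill bodies are placeholder string functions standing in for real model calls, and `content_workflow` and `writer_skill_v2` are hypothetical names; the point is only that the workflow depends on call shape, not on what happens inside each skill.

```python
from typing import Callable

Skill = Callable[[str], str]


# Placeholder skills: each would wrap a prompt, tools, and validation in practice.
def research_skill(topic: str) -> str:
    return f"notes on {topic}"


def summarize_skill(notes: str) -> str:
    return f"summary of {notes}"


def outline_skill(summary: str) -> str:
    return f"outline from {summary}"


def writer_skill(outline: str) -> str:
    return f"article based on {outline}"


def content_workflow(topic: str, writer: Skill = writer_skill) -> str:
    """Orchestration only: the workflow knows call order, not prompt details."""
    notes = research_skill(topic)
    summary = summarize_skill(notes)
    outline = outline_skill(summary)
    return writer(outline)


# A new writer implementation with the same interface.
def writer_skill_v2(outline: str) -> str:
    return f"revised article based on {outline}"


first = content_workflow("skills")
second = content_workflow("skills", writer=writer_skill_v2)
```

Swapping in `writer_skill_v2` changes the result without touching a line of `content_workflow`, which is the property the workflow layer relies on.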

Some other things this unlocks: you can attach logging and metrics at skill boundaries instead of burying them in prompts. You can run evaluations on individual skills. Multiple workflows can call the same skill without duplicating logic. Teams can work on skills independently without stepping on each other’s orchestration code.
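
As one illustration of attaching logging and metrics at the skill boundary, a decorator can record timing and outcome for every call without the skill itself knowing about it. The names `instrumented` and `CALL_LOG` are assumptions for this sketch, and the in-memory list stands in for whatever metrics backend a real system would use.

```python
import functools
import logging
import time
from typing import Callable

logger = logging.getLogger("skills")

# In-memory call log; a real system would likely send this to a metrics backend.
CALL_LOG: list[dict] = []


def instrumented(skill: Callable[..., str]) -> Callable[..., str]:
    """Record duration and outcome for every call that crosses the skill boundary."""

    @functools.wraps(skill)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        status = "error"
        try:
            result = skill(*args, **kwargs)
            status = "ok"
            return result
        finally:
            elapsed = time.perf_counter() - start
            CALL_LOG.append(
                {"skill": skill.__name__, "status": status, "seconds": elapsed}
            )
            logger.info("%s status=%s in %.3fs", skill.__name__, status, elapsed)

    return wrapper


@instrumented
def summarize_skill(text: str) -> str:
    # Stand-in for a model call.
    return text[:40]


summary = summarize_skill("Skills wrap model capabilities behind stable interfaces.")
```

The same decorator applies to any skill, so observability is added once at the boundary rather than reimplemented per prompt.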

It’s modular design. The same reasons it works in software apply here.

You Can Get the Granularity Wrong

Skills aren’t free. Over-abstracting creates its own problems, and it happens a lot when teams first discover this pattern.

If you cut things too fine:

title_generator_skill

intro_writer_skill

paragraph_writer_skill

conclusion_writer_skill

Now you’ve created a pile of tiny skills that don’t do anything useful on their own and require elaborate orchestration to combine. You’ve traded one mess for a different mess.

Go too broad instead:

content_engine_skill

And you’ve got a black box that’s hard to test, hard to improve, and not actually reusable across contexts.

The rule is the same one software modularity has always followed: a Skill should represent one reusable capability boundary. Not one prompt. Not an entire workflow. One coherent capability.

Getting that boundary right takes some iteration. That’s normal.

Where Skills Sit in the Stack

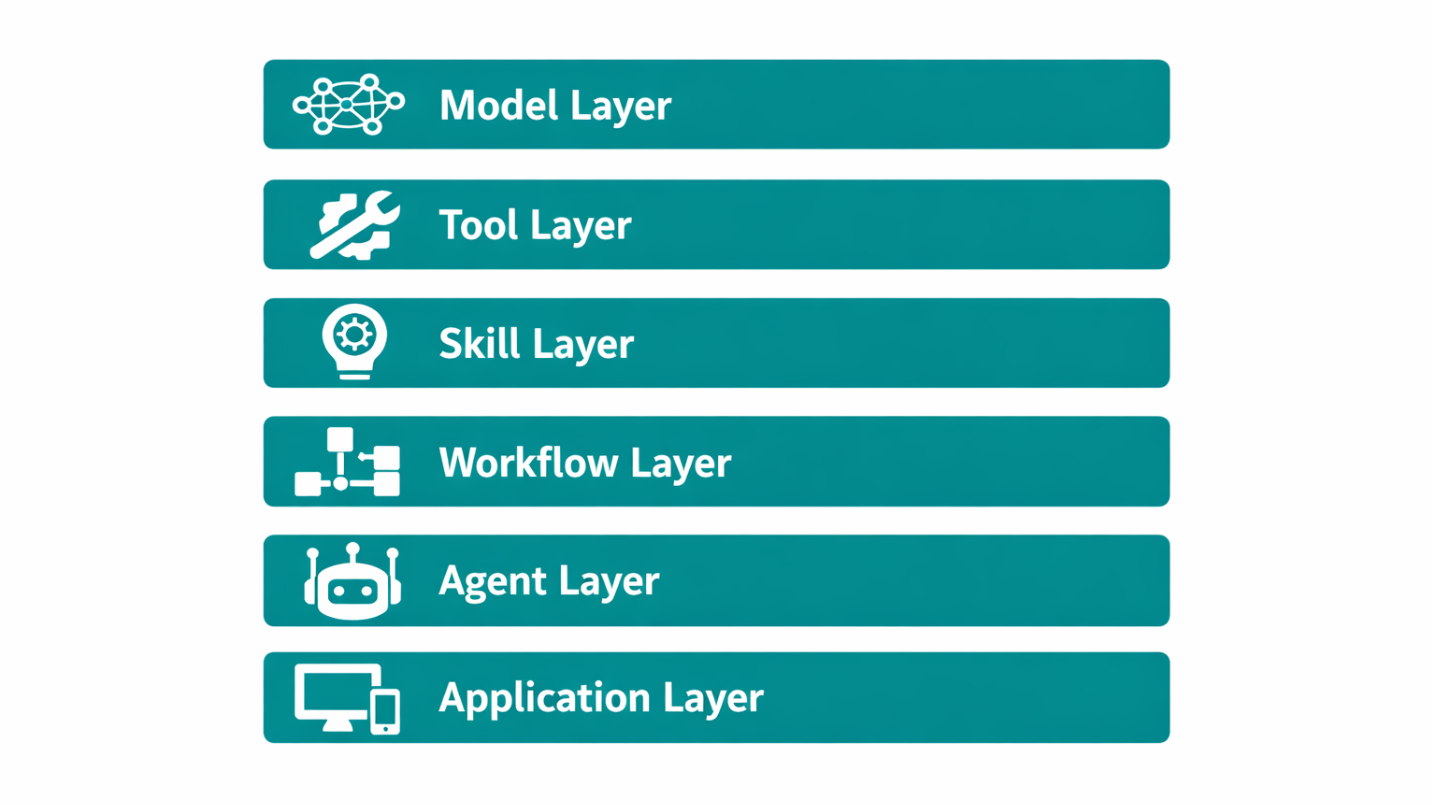

A practical architecture for a production AI system usually looks something like this:

Each layer has a distinct job:

| Layer | Responsibility |
|---|---|
| Model | Generate / reason |
| Tool | External actions / data access |
| Skill | Encapsulate reusable capability |
| Workflow | Orchestrate deterministic processes |
| Agent | Handle dynamic decision-making |
| Application | User-facing product logic |

The reason this layering matters is that a common mistake in AI product design is collapsing everything into the model layer—treating model behavior as the architecture itself. That works for demos. It falls apart as soon as you need to maintain, test, or scale anything.

The model is one layer. A useful one, obviously. But still just one layer.
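
A toy sketch can show how the layers stack without collapsing into each other. Everything here is hypothetical: `model` and `web_search` are stand-ins that return formatted strings, and the agent's keyword rule is a placeholder for what would normally be a model-driven choice.

```python
from typing import Callable


def model(prompt: str) -> str:
    """Model layer: generate text. Stand-in for a real model call."""
    return f"[generated from: {prompt}]"


def web_search(query: str) -> str:
    """Tool layer: external data access. Stand-in for a real search call."""
    return f"results for {query}"


def research_skill(topic: str) -> str:
    """Skill layer: one reusable capability built from a tool and the model."""
    return model(f"Summarize {web_search(topic)}")


def writer_skill(notes: str) -> str:
    """Skill layer: a second capability with the same call shape."""
    return model(f"Write an article from {notes}")


def article_workflow(topic: str) -> str:
    """Workflow layer: a fixed, deterministic sequence of skill calls."""
    return writer_skill(research_skill(topic))


SKILLS: dict[str, Callable[[str], str]] = {
    "research": research_skill,
    "write": writer_skill,
}


def agent(request: str) -> str:
    """Agent layer: picks a skill at run time (keyword rule as a placeholder)."""
    choice = "research" if request.lower().startswith("find") else "write"
    return SKILLS[choice](request)
```

Both `article_workflow` and `agent` reach the model only through skills, so a change to the model call or the search tool stays inside the lower layers.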

The Shift from Prompting to Engineering

There’s a point in building AI systems where you stop just interacting with models and start designing machine capabilities. It’s not always obvious when it happens, but you usually notice it in retrospect.

Skills are often where that shift shows up concretely.

Before Skills, most of your effort goes into getting the model to do the right thing in a given context. After Skills, you’re thinking about interfaces, contracts, validation, reuse—the same concerns that show up in any software system.

The underlying craft changes. It’s still prompt engineering in places, but the bigger picture is system design.

Conclusion

Prompting is still useful. It’s the basic interaction primitive for working with AI models and it’s not going away.

But prompts alone don’t constitute architecture.

When you need your system to be maintainable, testable, and reusable—when multiple workflows need to share behavior, when you need consistent tooling and validation across the system—you need an abstraction layer above raw prompting.

That’s the Skill layer.

The short version:

Prompts help you use AI.

Skills help you build with AI.

Once you’re building with AI, you’re doing system design. The sooner you design the system intentionally, the less you’ll be untangling it later.

Skills are the capability layer. The layer above, where agents are orchestrated to do real work, is where governance starts to matter. If you’ve hit unpredictable agent behavior, Why AI Agents Go Wrong: It’s Not the Model covers why most of those failures are structural problems rather than capability problems.

FAQ

Q: What is an AI Skill?

An AI Skill is a reusable capability abstraction that wraps model behavior with defined input/output schemas, tool access, validation, and retry logic. Unlike a prompt, it exposes a stable interface that other system components can depend on.

Q: What’s the difference between a prompt and a skill?

A prompt is a raw instruction sent to a model. A Skill is a structured capability contract—it defines inputs, tooling, constraints, and validation around that interaction, making it reusable, testable, and composable within larger systems.

Q: When should I use Skills instead of prompts?

When you need the same behavior across multiple workflows, when you want to test or evaluate model behavior independently, or when you need consistent tooling and retry logic across your system. If you’re using a prompt in more than one place, it’s probably worth wrapping it into a Skill.

Q: How do Skills relate to Agents and Workflows?

Skills sit between the tool layer and the workflow layer. Workflows orchestrate sequences of Skill calls. Agents add dynamic decision-making on top of that—choosing which Skills to invoke based on context. Skills are the stable building blocks both depend on.

Read next

- Skill Design as Interface Design — A skill behaves predictably to the exact degree its boundary is specified

- Why AI Agents Go Wrong: It’s Not the Model — Most agent failures aren’t model problems, they’re governance problems

- Token Economics of AI Agent Governance — Governance has a bounded, knowable token cost; ungoverned work doesn’t